Over the past 10 years, the best-performing artificial intelligence systems, such as the speech recognizers on smartphones or Google's latest automatic translator, have come from a technique called “deep learning.” Deep learning is, in fact, a new name for an approach to artificial intelligence called neural networks, which have been going in and out of fashion for more than 70 years. Neural networks were first proposed in 1944 by Warren McCulloch and Walter Pitts, two researchers at the University of Chicago. In 1952 they moved to the Massachusetts Institute of Technology as founding members of what is sometimes called the first cognitive science department.

Neural networks were a major area of research in both neuroscience and computer science until 1969, when, according to computer science lore, they were killed off by the MIT mathematicians Marvin Minsky and Seymour Papert, who a year later would become co-directors of the new MIT Artificial Intelligence Laboratory.

The technique enjoyed a resurgence in the 1980s, fell into eclipse again in the first decade of the new century, and returned with fanfare in the second, fueled largely by the rapidly growing processing power of graphics chips.

“There’s this idea that ideas in science are a bit like epidemics of viruses,” says Tomaso Poggio, professor of brain and cognitive sciences at MIT. “There are apparently five or six basic strains of flu viruses, and each one comes back with a period of around 25 years. People get infected, they develop immunity, and so they don’t get infected for the next 25 years. Then a new generation appears that is ready to be infected by the same strain of virus. In science, people fall in love with an idea, get excited about it, hammer it to death, and then get immunized: they get tired of it. So ideas should have the same kind of periodicity.”

Important questions

Neural networks are a means of doing machine learning, in which a computer learns to perform some task by analyzing training examples. Usually, the examples have been hand-labeled in advance. An object recognition system, for instance, might be fed thousands of labeled images of cars, houses, coffee cups, and so on, and it would find visual patterns in the images that consistently correlate with particular labels.

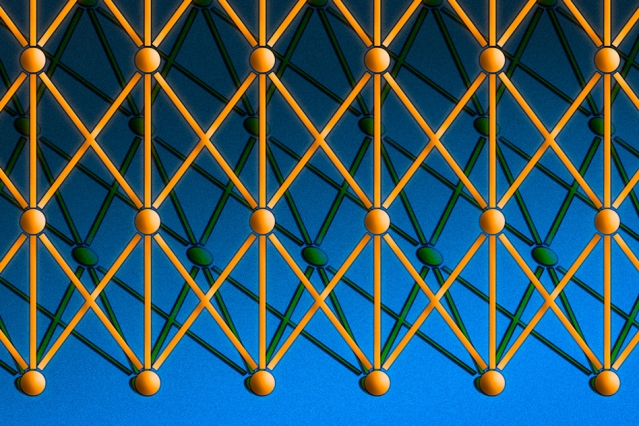

Neural networks are loosely modeled on the human brain: a neural network consists of thousands or even millions of simple processing nodes that are densely interconnected. Most of today's neural networks are organized into layers of nodes, and they are “feed-forward,” meaning that data moves through them in only one direction. An individual node might be connected to several nodes in the layer beneath it, from which it receives data, and to several nodes in the layer above it, to which it sends data.
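The layered, one-direction structure can be sketched in a few lines. This is a minimal illustration, not how any particular system is built: the names `make_network` and `forward`, the weight layout, and the random initial weights are all assumptions for the example, and thresholds are left out to keep the focus on how data flows upward from layer to layer.

```python
import random


def make_network(layer_sizes, seed=0):
    """Build random weights: one matrix per pair of adjacent layers.

    weights[k][j][i] is the weight on the link from node i in layer k
    to node j in layer k + 1 (a hypothetical layout chosen for this sketch).
    """
    rng = random.Random(seed)
    return [
        [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    ]


def forward(network, inputs):
    """Pass data through the layers in one direction only (feed-forward)."""
    activations = list(inputs)
    for layer in network:
        # Each node in the next layer sums the weighted outputs of the layer below.
        activations = [
            sum(w * a for w, a in zip(node_weights, activations))
            for node_weights in layer
        ]
    return activations


net = make_network([3, 4, 2])  # 3 input nodes, 4 hidden nodes, 2 output nodes
outputs = forward(net, [1.0, 0.5, -0.2])
```

Adding more entries to the list of layer sizes makes the network deeper without any change to `forward`.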

To each of its incoming connections, a node assigns a number known as a “weight.” When the network is active, the node receives a different data item (a different number) over each of its connections and multiplies it by the associated weight. It then adds the resulting products together, yielding a single number. If that number is below a threshold value, the node passes no data to the next layer. If the number exceeds the threshold value, the node “fires,” which in today's neural networks generally means sending the number (the sum of the weighted inputs) along all its outgoing connections.
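A single node as described above can be written as one small function. This is a sketch under stated assumptions: the name `node_output` is hypothetical, and returning `None` is just one way to represent “passes no data to the next layer.”

```python
def node_output(inputs, weights, threshold):
    """One node: weighted sum of the inputs, then a threshold test.

    Returns the weighted sum if it exceeds the threshold (the node "fires"),
    otherwise None to signal that nothing is passed to the next layer.
    """
    total = sum(x * w for x, w in zip(inputs, weights))
    return total if total > threshold else None


# 1.0*0.5 + 2.0*(-0.25) + 3.0*0.25 = 0.75, which exceeds 0.0, so the node fires.
fired = node_output([1.0, 2.0, 3.0], [0.5, -0.25, 0.25], threshold=0.0)
# The same sum does not exceed 1.0, so the node stays silent.
silent = node_output([1.0, 2.0, 3.0], [0.5, -0.25, 0.25], threshold=1.0)
```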

When a neural network is being trained, all of its weights and thresholds are initially set to random values. Training data is fed to the bottom layer (the input layer) and passes through the succeeding layers, getting multiplied and added together in complex ways, until it finally arrives, radically transformed, at the output layer. During training, the weights and thresholds are continually adjusted until training data with the same labels consistently yield similar outputs.
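The training loop described above (random starting values, then repeated adjustment until labeled examples come out right) can be illustrated with a single threshold node. The adjustment rule used here is the classic perceptron update, chosen only because it is the simplest concrete scheme; modern networks adjust their weights with gradient-based methods instead. The AND-gate training set, the 0/1 outputs, the learning rate, and the names `predict` and `train` are all assumptions for the example.

```python
import random


def predict(weights, threshold, x):
    # Output 1 if the weighted sum exceeds the threshold, else 0.
    return 1 if sum(w * xi for w, xi in zip(weights, x)) > threshold else 0


def train(examples, epochs=1000, lr=0.1, seed=0):
    rng = random.Random(seed)
    n = len(examples[0][0])
    # Weights and threshold start at random values.
    weights = [rng.uniform(-1, 1) for _ in range(n)]
    threshold = rng.uniform(-1, 1)
    for _ in range(epochs):
        errors = 0
        for x, label in examples:
            error = label - predict(weights, threshold, x)
            if error:
                errors += 1
                # Nudge each weight toward the correct answer; the threshold
                # moves the opposite way (a higher threshold makes firing harder).
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                threshold -= lr * error
        if errors == 0:  # every training example now gets its label
            break
    return weights, threshold


# Hypothetical labeled training data: the logical AND of two inputs.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, threshold = train(AND_EXAMPLES)
```

Because AND is linearly separable, the perceptron convergence theorem guarantees that this loop stops after finitely many adjustments.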

Mind and machines

The neural networks described by McCulloch and Pitts in 1944 had thresholds and weights, but they were not arranged into layers, and the researchers did not specify any training mechanism. What McCulloch and Pitts showed was that a neural network could, in principle, compute any function that a digital computer could. The result was more neuroscience than computer science: the point was to suggest that the human brain could be thought of as a computing device.
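One way to see why threshold units can, in principle, compute anything a digital computer can: a single unit with fixed, hand-chosen weights can act as a NAND gate, and NAND is functionally complete, so any Boolean function can be wired from NAND units alone. The sketch below builds XOR this way. Representing inhibition with negative weights and using the “exceeds the threshold” rule are modern simplifications of the 1944 formalism, and the function names are hypothetical. Note that nothing here is trained; the weights are set by hand.

```python
def mp_unit(inputs, weights, threshold):
    # Binary threshold unit: fires (outputs 1) when the weighted sum exceeds the threshold.
    return 1 if sum(w * x for w, x in zip(weights, inputs)) > threshold else 0


def nand(a, b):
    # -(a + b) > -1.5 holds unless both inputs are 1.
    return mp_unit((a, b), (-1, -1), -1.5)


def xor(a, b):
    # Standard four-NAND construction of XOR.
    c = nand(a, b)
    return nand(nand(a, c), nand(b, c))


truth_table = [xor(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
```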

Neural networks continue to be a valuable tool for neuroscientific research. For instance, particular network layouts or rules for adjusting weights and thresholds have reproduced observed features of human neuroanatomy and cognition, an indication that they capture something about how the brain processes information.

The first trainable neural network, the Perceptron, was demonstrated by the Cornell University psychologist Frank Rosenblatt in 1957. The Perceptron's design was much like that of the modern neural network, except that it had only one layer with adjustable weights and thresholds, sandwiched between input and output layers.

Perceptrons were an active area of research in both psychology and the fledgling discipline of computer science until 1969, when Minsky and Papert published a book titled Perceptrons, which demonstrated that carrying out certain fairly common computations on Perceptrons would be impractically time-consuming.

“Of course, all of these limitations kind of disappear if you take machinery that is a little more complicated, like two layers,” Poggio says. But at the time, the book had a chilling effect on neural network research.
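A frequently cited example of what one adjustable layer cannot do is XOR (output 1 when exactly one input is 1): no single threshold node can compute it, because no straight line separates its 1-outputs from its 0-outputs. The sketch below illustrates this by searching a small grid of weights and thresholds for a single-node solution and finding none, then shows that two layers of nodes with hand-picked weights compute XOR easily. The grid search only illustrates the point; the separating-line argument is what actually rules out a single node. The grid, the weights, and the function names are assumptions for the example.

```python
from itertools import product

XOR_TABLE = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}


def unit(inputs, weights, threshold):
    # Threshold node: 1 if the weighted sum exceeds the threshold, else 0.
    return 1 if sum(w * x for w, x in zip(weights, inputs)) > threshold else 0


def single_unit_solutions(grid):
    """All (w1, w2, threshold) on the grid for which ONE node computes XOR."""
    return [
        (w1, w2, t)
        for w1, w2, t in product(grid, repeat=3)
        if all(unit(x, (w1, w2), t) == y for x, y in XOR_TABLE.items())
    ]


def two_layer_xor(x):
    # Hidden layer: an OR-like node and a NAND-like node.
    h_or = unit(x, (1, 1), 0.5)
    h_nand = unit(x, (-1, -1), -1.5)
    # Output layer: fires only when both hidden nodes fire (AND).
    return unit((h_or, h_nand), (1, 1), 1.5)


GRID = [i / 2 for i in range(-6, 7)]  # -3.0, -2.5, ..., 3.0
```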

“You have to put these things in historical context,” Poggio says. “They were arguing for programming, for languages like Lisp. Not many years before, people were still using analog computers. It was not at all clear at the time that programming was the way to go. I think they went a little bit overboard, but as usual, it’s not black and white. If you think of this as a competition between analog computing and digital computing, they fought for what at the time was the right thing.”

Periodicity

By the 1980s, however, researchers had developed algorithms for modifying neural networks' weights and thresholds that were efficient enough for networks with more than one layer, removing many of the limitations identified by Minsky and Papert. The field enjoyed a renaissance.
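The best known of these multilayer training algorithms is backpropagation (the text does not name it). The sketch below trains a tiny two-layer network on XOR with backpropagation and plain gradient descent. To make the error differentiable, the hard threshold is replaced by a smooth sigmoid, and each threshold becomes a “bias” (a negated threshold). The hidden-layer size, learning rate, epoch count, squared-error loss, and all names are assumptions for illustration, and with an unlucky random start such small networks can occasionally stall in a poor solution.

```python
import math
import random

XOR = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_xor(hidden=4, lr=0.5, epochs=5000, seed=1):
    rng = random.Random(seed)
    # w1[j]: the two input weights of hidden node j; b1[j]: its bias.
    w1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(hidden)]
    b1 = [rng.uniform(-1, 1) for _ in range(hidden)]
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = rng.uniform(-1, 1)

    def forward(x):
        h = [sigmoid(w[0] * x[0] + w[1] * x[1] + b) for w, b in zip(w1, b1)]
        y = sigmoid(sum(wo * hj for wo, hj in zip(w2, h)) + b2)
        return h, y

    def loss():
        # Mean squared error over the four training examples.
        return sum((forward(x)[1] - t) ** 2 for x, t in XOR) / len(XOR)

    history = [loss()]
    for _ in range(epochs):
        for x, t in XOR:  # one gradient step per example
            h, y = forward(x)
            # Output error signal; the constant factor 2 from the squared
            # error is absorbed into the learning rate.
            delta_out = (y - t) * y * (1 - y)
            # Propagate the error backward to each hidden node
            # (uses w2 before it is updated below).
            delta_hid = [delta_out * w2[j] * h[j] * (1 - h[j]) for j in range(hidden)]
            # Gradient-descent updates for both layers.
            for j in range(hidden):
                w2[j] -= lr * delta_out * h[j]
                w1[j][0] -= lr * delta_hid[j] * x[0]
                w1[j][1] -= lr * delta_hid[j] * x[1]
                b1[j] -= lr * delta_hid[j]
            b2 -= lr * delta_out
        history.append(loss())
    return forward, history


forward, history = train_xor()
predictions = [round(forward(x)[1]) for x, _ in XOR]
```

Comparing `history[0]` with `history[-1]` shows how much the error fell during training.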

But intellectually, there was something unsatisfying about neural networks. Enough training may revise a network's settings to the point that it can usefully classify data, but what do those settings mean? What image features is an object recognizer looking at, and how does it piece them together into the distinctive visual signatures of cars, houses, and coffee cups? Looking at the weights of individual connections will not answer that question.

In recent years, computer scientists have begun to devise ingenious methods for deducing the analytic strategies adopted by neural networks. But in the 1980s, the networks' strategies were indecipherable. So around the turn of the century, neural networks were supplanted by support vector machines, an alternative approach to machine learning based on some very clean and elegant mathematics.

The recent resurgence of neural networks, the deep learning revolution, comes courtesy of the computer game industry. The complex imagery and rapid pace of today's video games require hardware that can keep up, and the result has been the graphics processing unit (GPU), which packs thousands of relatively simple processing cores on a single chip. It did not take long for researchers to realize that the architecture of a GPU is remarkably well suited to neural networks.

Modern GPUs enabled the one-layer networks of the 1960s and the two- to three-layer networks of the 1980s to blossom into the 10-, 15-, even 50-layer networks of today. That is what the “deep” in “deep learning” refers to: the depth of the network's layers. Currently, deep learning is responsible for the best-performing systems in almost every area of artificial intelligence research.

Under the hood

The networks' opacity is still unsettling to theorists, but there is headway on that front, too. Poggio directs a research program on the theoretical foundations of intelligence. Recently, Poggio and his colleagues released a three-part theoretical study of neural networks.

The first part, published last month in the International Journal of Automation and Computing, addresses the range of computations that deep learning networks can execute and when deep networks offer advantages over shallower ones. Parts two and three, which have been released as reports, address the problem of global optimization (guaranteeing that a network has found the settings that best fit its training data) and overfitting, cases in which the network becomes so attuned to the specifics of its training data that it fails to generalize to other instances of the same categories.

There are still plenty of theoretical questions to be answered. But there is hope that neural networks may finally break the generational cycle that has plunged them alternately into heat and cold.